LLMs Are Practically ADHD

I was diagnosed with ADHD at 41. After decades of fighting a brain that loses context, drops threads, and can’t sustain rigid routines, I finally had a name for it. Medication addressed the neurochemistry — it made it possible to focus. But focus on what? The structural problems remained. Where did I leave off? What notebook had those notes? What was the context three weeks ago?

Then I started building with AI agents daily. Memory systems. Session continuity architectures. Governance patterns that keep agents reliable across restarts and context window compactions.

And one day the pattern clicked: LLMs are practically ADHD. No wonder we mesh.

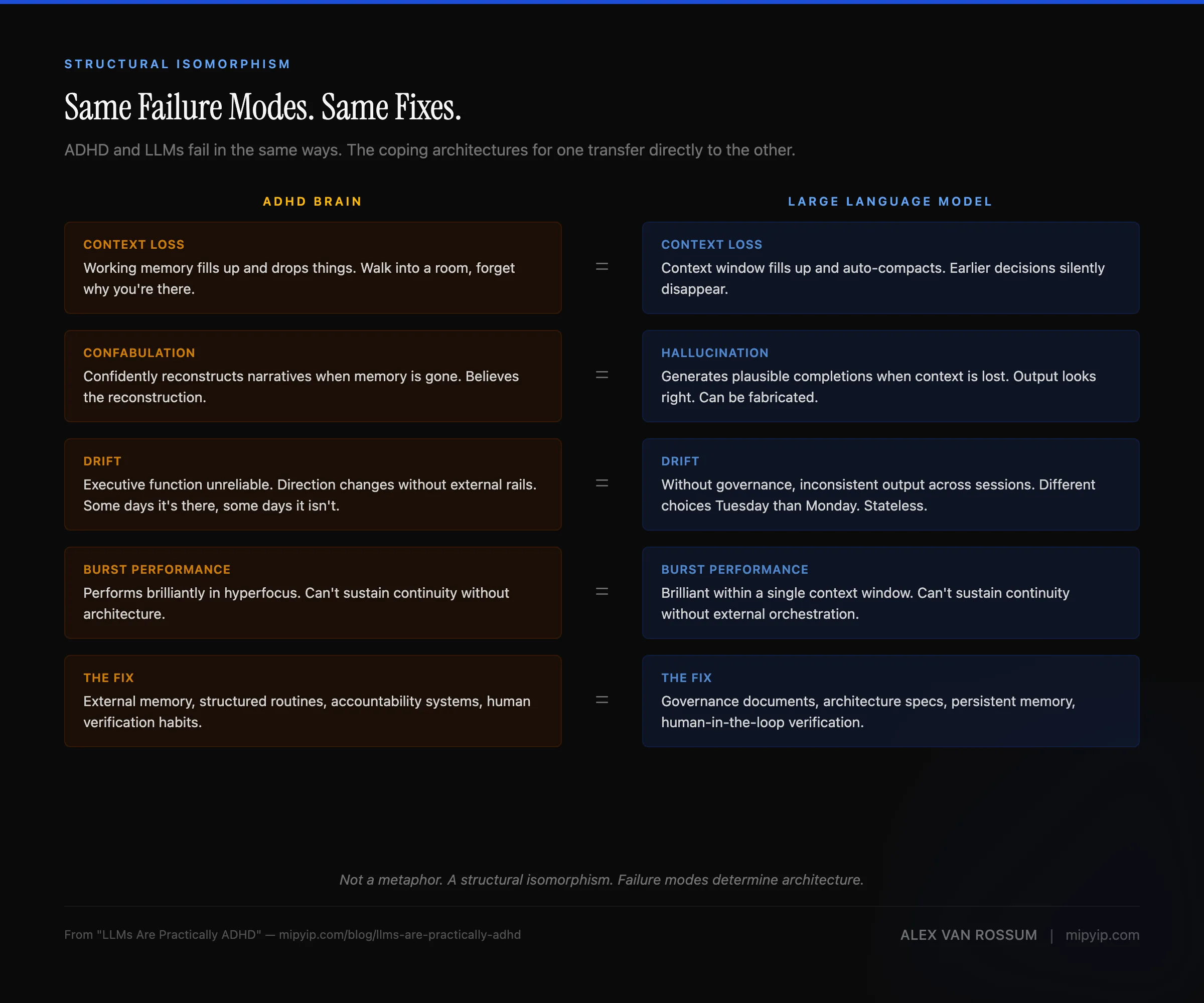

That’s not a punchline. It’s a structural observation that changed how I think about both systems. If you’re deploying AI agents that drift, confabulate, and lose context between sessions — the failure modes are the same, and so are the fixes.

The parallel

| ADHD Brain | Large Language Model |

|---|---|

| Loses context when working memory fills up | Loses context when the context window fills up |

| Can’t reliably remember three weeks ago | Can’t reliably remember three sessions ago |

| Needs external systems to maintain state | Needs external systems to maintain state |

| Performs brilliantly in hyperfocus bursts | Performs brilliantly within a single context window |

| Can’t sustain continuity without architecture | Can’t sustain continuity without architecture |

| Confidently reconstructs narratives when memory is gone | Confidently confabulates when context is lost |

| Needs governance rails or it drifts | Needs governance rails or it drifts |

| Executive function requires external scaffolding | Reliable execution requires external orchestration |

Every row in that table describes the same underlying failure mode expressed in two different systems. Not a metaphor. A structural isomorphism.

Context loss is context loss

The most direct parallel is context loss. My brain has a working memory buffer that fills up and drops things. An LLM has a context window that fills up and drops things. The mechanism is different — neurochemistry vs tokenization — but the failure mode is identical: once the buffer is full, earlier context falls off a cliff.

For ADHD, this means walking into a room and forgetting why I’m there. For an LLM, this means auto-compaction silently discarding the session context that made the agent productive in the first place. Same problem. Same consequence: the system keeps running, but it’s running on incomplete information and doesn’t know what it’s lost.

The solution is the same too. External memory. You can’t expand the buffer, so you externalize what matters into a system the buffer can reference. For me, that’s structured notes, agent-maintained context files, and tools that hold what my brain can’t. For LLMs, it’s governance documents, architecture specs, and persistent memory tiers that survive compaction.

Confabulation

This one is uncomfortable to admit. ADHD brains don’t just forget — they reconstruct. Inattention and working-memory deficits disrupt how experiences are encoded, so events get badly recorded or never make it into long-term memory at all. When those gaps exist, the brain fills them in with plausible narratives — a process called confabulation. Confidently. You’re not lying. You genuinely believe the reconstructed version. Adults with ADHD produce more false memories than controls, hold them with stronger confidence, and show more knowledge corruption over time. You’ll argue for it. And you’ll be wrong.

I figured this out about myself maybe twenty years ago — the hard way, obviously — and started building countermeasures. Notebooks first, then Obsidian, then Notion. External records I could check when my brain produced a memory that felt a little too clean, a little too convenient. Did that actually happen, or did I just construct the most plausible version? became a reflex. The answer was uncomfortably often the latter. But the habit of checking — of never fully trusting unverified recall — turned out to be the important part.

LLMs do the same thing. When context is lost, they generate plausible completions. Confidently. They’re not “lying” — they’re doing what they do: producing the most statistically likely continuation of whatever context remains. Their training objective optimizes for fluency and likelihood, not truth. The output looks right. It reads right. And it can be completely fabricated.

The failure mode isn’t ignorance. It’s confident ignorance. Both systems produce their best work indistinguishably from their worst work if you’re not checking. The governance pattern is the same: trust but verify, and build systems that make verification the default rather than the exception. My notebooks and Notion databases are the same class of solution as the memory frameworks and external state files I build for AI agents — external verification systems that catch confabulation before it compounds.

Governance rails or drift

Left to my own devices, without external structure, I drift. Not because I’m lazy — because that’s how ADHD works. The executive function that sustains long-term direction, that remembers priorities when something shiny appears, that maintains consistency across days and weeks — it’s unreliable. Some days it’s there. Some days it isn’t. You can’t build a system on “some days.”

LLMs drift the same way. Without governance documents, without architectural constraints, without clear boundaries, they produce inconsistent output across sessions. They make different architectural choices on Tuesday than they made on Monday. They rename things. They reorganize structures. They solve the same problem three different ways in the same codebase. Not because they’re bad at their job — because they’re stateless. Every session starts from zero, and without external rails, zero doesn’t have a direction.

The solution, again, is the same class of solution. External governance that persists across sessions. For me, it’s structured routines, external systems, and yes — AI agents that maintain context on my behalf. For LLMs, it’s architecture documents, governance files, and working memory systems that survive context window resets.

Wait, haven’t I solved this before?

The problems I’ve been solving for my own cognition for years — the coping mechanisms, the external memory systems, the “don’t trust your first instinct, check the system” habits — map directly to enterprise AI deployment problems. I didn’t see that coming.

| Personal Problem | Enterprise Problem |

|---|---|

| Context lost between sessions | AI agents lose state across conversations |

| Notes from three weeks ago are useless | Institutional knowledge doesn’t survive team turnover |

| Rigid daily routines fail | Workflow compliance drops after initial adoption |

| Need external systems to hold what the brain can’t | Need external state management for AI context windows |

| Governance to prevent the agent from losing the plot | Governance to prevent AI hallucination and drift |

| Human-in-the-loop by necessity (can’t trust unreviewed output) | Human-in-the-loop by policy (enterprise AI governance requirements) |

I didn’t learn these patterns from a whitepaper. I learned them because my brain demanded them.

The external memory systems I built to maintain context across life sessions are the same class of system that enterprises need to maintain AI agent state across conversations. The governance structures I use to keep myself from drifting are the same class of structure that organizations need to keep AI output reliable. The human-in-the-loop habit I developed because I can’t fully trust my own unverified recall is the same class of pattern that enterprise AI governance requires by policy.

The architecture that works for both

Four patterns keep showing up, whether I’m designing systems for my own cognition or for AI agents:

External memory. The brain forgets. The context window compacts. Neither system can be trusted to hold critical state internally. So you externalize it — into documents, into databases, into structured files that the system can reference when it needs context it can no longer hold.

Session continuity. Whether it’s a new day for the brain or a new context window for the agent, the system needs to pick up where it left off. That means writing down what happened, what matters, and what comes next — before the session ends. Not as a nice-to-have. As a prerequisite for the next session being productive.

Conversation-driven data capture. Compliance-based logging fails. It fails for ADHD brains because the executive function required to maintain the habit is exactly the executive function that’s impaired. It fails for AI systems because rigid data entry workflows have the same adoption cliff that rigid routines have for humans. The alternative: systems that generate their own data through natural interaction. You don’t fill out a form. You have a conversation, and the system captures what matters.

Governance that survives restarts. Every morning, my brain reboots. Every new context window, the agent reboots. Governance can’t live in the session — it has to live outside the session, in structures the system reads on startup. The rules persist even when the state doesn’t.

These aren’t ADHD coping mechanisms repurposed for AI. They’re solutions to a class of architectural problem: how do you get reliable, consistent output from a stateless system over time? (And when you have multiple agents? The problem multiplies — which is why separation of concerns matters as much for AI agents as it does for human teams.)

What that looks like in practice:

| Pattern | ADHD Implementation | AI Implementation |

|---|---|---|

| External memory | Notion databases, structured notes, journals | Vector stores, governance docs, CLAUDE.md files |

| Session continuity | Morning review rituals, handoff notes to future self | Run logs, session memory directories, handoff protocols |

| Conversation-driven capture | Voice memos, chat-based logging with life-bot | Agent-generated context files, conversational data entry |

| Governance that survives restarts | Daily routines, external checklists, accountability systems | Architecture documents, config files read on init |

This isn’t a metaphor

ADHD and LLMs are obviously different systems. One is neurobiological. The other is statistical. I’m not saying they’re the same thing.

But they fail in the same ways. They lose context. They confabulate. They drift without rails. They perform brilliantly in bursts but can’t sustain direction without external architecture. And the solutions that work for one — external memory, session continuity, governance, human-in-the-loop verification — work for the other. Not because the systems are similar. Because the failure modes are similar, and failure modes determine architecture.

I’ve been solving context loss, state management, and continuity across interruptions since before LLMs existed. I just didn’t know the same patterns would transfer so directly. The full methodology is cognitive offloading — deliberately choosing what stays in your head and building systems to handle the rest.

That’s not a coincidence. It’s structural. And it suggests something worth paying attention to: the emerging field of AI governance might have more to learn from decades of ADHD research and accommodation design than anyone currently realizes. Both fields are trying to answer the same question — how do you build reliable systems around an engine that’s powerful but inconsistent?

The ADHD community has been working on that question for a lot longer than the AI community has.

I build tools around these patterns: Actions externalizes command memory so you don’t have to hold it. Panoptisana cuts Asana’s noise down to a flat, searchable list. Both are designed for the same constraint — a powerful engine that can’t hold its own state.

ADHD sources: ADHD Can Trip Up Memories — CHADD · False Memory in Adults With ADHD — Journal of Attention Disorders · Confabulation — StatPearls / NIH · The False Memory Syndrome — BMC Psychiatry · How ADHD Impacts Long-Term Goal Setting — Relational Psych · ADHD Executive Dysfunction — ADDitude · Working Memory Powers Executive Function — ADDitude · ADHD and Executive Dysfunction — Drake Institute · Externalizing Executive Functioning — Courage to Be Therapy · Guide to Journaling for ADHD — Reflection

LLM and AI agent sources: Why Language Models Hallucinate — OpenAI · Survey and Analysis of Hallucinations in Large Language Models — PMC / NIH · Large Language Models Hallucination: A Comprehensive Survey — arXiv · Why Statefulness Matters — Letta · Reducing LLM Hallucinations — Zep · How Does LLM Memory Work? — DataCamp · Building Stateful Continuity in Stateless LLM Services — Hoomanely · Conversation-as-a-Database — Rachael Annabelle Yong

Or get new posts in your inbox

Occasional writing on systems, ADHD, and AI. No cadence pressure.

You're in. I'll send you the next one.